“Updating priors” has become one of those tropey phrases that more and more people say, like “thinking from first principles”. Poker bros started it. Finance bros popularized it. Now tech bros, who are all by default LLM bros, are spreading it more, if only to signal that they know at least something about how backpropagation works.

I am as guilty of doing this as any.

But “updating priors” is a good principle to live by, even though in practice, it requires energy, humility, and commitment to finding the “ground truth” (another tropey phrase). It is especially hard to pull off on topics that other people think you know something about. That’s how I feel about AI in China, or “ChinAI”, as Jeff Ding’s inimitable and namesake newsletter calls it. “ChinAI” is a subject matter I treat with much humility, because China is moving fast, AI is also moving fast, and combined is moving faster than the sum of its parts. (Timestamping the title of a post on this subject is the least I can do.)

I recently had the good fortune to exercise this humility with a group of sharp, smart, and open-minded AI writers and researchers from the US and the UK. Some are China rookies, others are China veterans, I put myself in the middle of that spectrum. We spent nine days together, traversed four cities, and interacted with as many Chinese AI researchers from leading labs on the “ground” to discover some “truth”.

The trip was inadvertently well-timed. DeepSeek dropped its highly-anticipated V4 model on the day that most of us landed. The Meta-Manus acquisition blow up happened on day one of our itinerary. The Trump-Xi summit, where AI appears to be a key agenda item, was just around the corner.

Over the course of our trip, we chatted with researchers from almost all the leading AI labs in China – roughly 85% of that universe by my own estimation. Some were done in meetings inside large conference rooms that made us look like foreign dignitaries. Some happened more informally over lunches with Domino’s Pizza or dinners around a lazy susan. And some were quick chats in hotel lobbies, in order to fit something in between our hectic travel schedule and their more hectic work schedule. They’ve all got AGIs to build!

These are the labs that I personally got to spend some time with: Moonshot AI (Kimi), Tsinghua AIR (Institute for AI Industry Research), 01.ai (Kaifu Lee’s AI lab), Z.ai, DeepSeek, Mimo (Xiaomi’s AI lab), Inclusion AI (Ant Group’s AI lab), Alibaba Cloud, MiniMax, RWKV, Seed (ByteDance).

This post is my attempt to thematically sum up the most interesting takeaways from this trip that meaningfully changed some of my priors or reinforced other intuitions. I categorize them as either "Commonalities” among the Chinese labs or “Differences” as in points of divergence that revealed more nuances and less uniformity. Because so much of the conversations happened informally in private settings, these takeaways will not be attributed to any labs explicitly, but presented as composite observations.

Commonalities

Cracked Interns

Our group’s intended focus was to talk to core researchers. And we got our wish for the most part. We met with many talented researchers, many of whom held the vaulted title of “interns”. This is for good reasons, because most of them are still PhD students. Except these internships are one year, sometimes two years long. They are also treated as full-time employees with full data, compute, and systems access to generate ideas and run experiments.

These “cracked interns” are the workhorses of China’s leading labs. The average age is in the mid-20s – whip smart computer scientists born around or after the turn of the century. They are terminally online (especially on X/Twitter) and can converse in English without much struggle on technical topics. Most of them hail from Chinese universities and have not studied abroad.

This stands in stark contrast to the lack of internship opportunities offered by leading western AI labs and the lack of “real work” an intern is even allowed to do if offered a spot, an observation that Nathan Lambert (and fellow traveler) shared in one of his posts about the trip. When we asked the labs about the underlying reasons, the explanation was ruthlessly practical.

For one, the labs don’t see more senior people, be it professors or PhD graduates with deep industry experience, as a good source of research talent, because the field of LLM and generative AI is too new and moving too fast. There is a premium placed on raw brain power and fresh minds swirling with new ideas from first principles (oops, I did it again). Less weight is assigned to track record and experience in adjacent AI disciplines. More than one lab has told me that going to academic conferences lately to scout for ideas has been a waste of time. The typical conference’s submission and admission schedule is way too slow to reflect the latest frontier AI research.

For another, coming from the PhD advisors’ point of view, there are simply not enough compute resources inside any university to allow the ideas of talented students to flourish and publish. Thus, seconding their students inside the labs equipped with more compute, while knowing the labs would allow their students to publish papers in partnership with their universities, is a win-win proposition. The prefill-as-a-service paper, a personal favorite of late, is an example of a joint production between Moonshot AI and Tsinghua University.

There is a symbiotic chemistry and understanding between the academic institutions and the AI labs – the former filters for raw talent, the latter makes good use of them. Strategically, this is an important observation, as this symbiosis may pave the way for a stronger homegrown AI talent pipeline in the long run.

No Philosopher Kings

Among these twenty-something “cracked interns”, we found few who did much philosophizing about the societal implications and ramifications of what they were building day and night. When we asked them how they define AGI, more than a few researchers from different labs coincidentally answered in exactly the same way: “AGI is when the AI can replace me!”

There wasn’t much hint of worry or melancholy in the tone of their answer either. Instead of fearing to be replaced, they sparkled in wonderment as to whether a machine can indeed be built to be more capable than its maker. And if that is accomplished one day, then they would happily move on to doing something else.

This is another contrast to their western counterparts, many of whom actively worry about AI’s safety or societal implications privately. They also spent time philosophizing about these issues on X, on podcasts, or even engaging with lawmakers to lobby for their preferred policy outcomes. There are no equivalent "philosopher kings” in the Chinese AI ecosystem. It is instead populated by researchers who prefer to focus on just building the tech with, as fellow traveler Afra Wang elegantly coined, “monastic intensity”.

You can chalk this up to either youthful idealism or pure naïveté. My interpretation has a more system-level bent. When my fellow travelers asked some of the researchers about AI safety, they did share a common (and common sense) belief and commitment in making AI safe – everyone believes AI should not do bad things. But ensuring that outcome is something that the government, their government, should and can figure out.

This division of role mentality, quintessentially Confucian in some ways, is in my view a product of the system they live in. The philosopher kings of the west live in participatory democracies where there is a higher degree of personal agency. If AI’s societal impact is a big personal issue for you, and you work in AI research and can give voice to that issue, there are many productive avenues to act on those impulses. Similar avenues simply do not exist in the Chinese system of governance for the rank-and-file researchers, so why waste your time and energy?

Plus, these Chinese researchers have too much work in front of them, made worse by the cards they are dealt.

Less Compute But Still More Research

By cards dealt, I don’t necessarily mean the system of government they are born into. Compute constraint, or lack of GPU cards (yes those cards), made irreparably worse by US export control is the most immediate obstacle to scale.

This US-policy-led compute constraint and its impact is all too predictable and should not surprise anyone. I foresaw this outcome 18 months ago when it was obvious then as it is now that compute scaling is nowhere near hitting a wall. Don’t let anyone convince you otherwise. Most of these labs are still running on the NVIDIA chips they were able to procure before the “yard” got bigger. Some are renting capacity from different hyperscalers, which is still legal and above board. Some, perhaps, are making use of smuggled chips, though we did not challenge our hosts on this front when the fact patterns have been laid bare (we had no interest in being naughty guests).

What was more telling was how Chinese labs were making use of their limited resources to still support core R&D to improve model intelligence or increase efficiency and reduce cost. Some labs are splitting their total compute resources by a 3:1:1 ratio – as in 3 GPUs for research, 1 for pre-training, 1 for post-training. Others privately shared that they allocate about half of their total resources to research, the rest to other types of workloads.

The precise proportion is less important than the direction of travel. Devoting more resources to research and experimentation makes sense, especially given the compute constraints, when yolo’ing on a big training run is infinitely more costly. Spending considerable compute to try, test, toss aside, or incorporate ideas that work, however small and incremental, increases the odds of success of an eventual large-scale training run, of which most Chinese labs simply can’t afford to run too many. Since core research tends to consume most of the resources to build any new model, guarding precious GPUs for research is the smart strategy given the constraints.

During one of our meetings, in the spirit of reciprocity after pumping our host for information for close to two hours, we shared with the Chinese researchers the rough GPUs per researcher ratio inside OpenAI. Their jaws literally dropped when they heard the number. Yet, we all know the OpenAI researchers, or every researcher in every western AI lab under the sun, would still complain about how little compute they have.

In so many ways, AI researchers in Silicon Valley don't know how good they have it. Meanwhile, their Chinese counterparts are forging ahead with what little resources they have and playing for the best outcome with the cards they are dealt.

Differences

On any topic related to ChinAI, the differences are oftentimes more scintillating and useful than the commonalities. Even within the ecosystem of AI labs, I noticed divergence on some important dimensions.

Open vs Closed Spectrum

One divergence is the attitude and approach towards open versus closed models. This flies in the face of the consensus that emerged from last year, when the dominance and proliferation of open models from China was a major trend, earning the accolades of grassroots developers overseas and leaders at home, all the way up to the top. (Premier Li Qiang gave a shoutout to “open source” during his address at the Two Sessions in March.) Not to be left out, the powerful voices of Washington, DC, and the policy brains of leading American labs that feed those voices, also threw lots of shade at Chinese open models as the source of much AI evil.

This widespread recognition and infamy from all sides gave the impression that Chinese labs have to open source their models to appease their country’s leaders as an instrument of some grand geopolitical strategy. The ground truth tells a different story.

Depending on the lab’s financial situation and revenue pressure, a split is emerging on whether to keep open sourcing models and where to draw the line. This line is becoming clear: the one trillion parameter model size.

For some labs, releasing an open model that is one trillion parameters or larger is a waste of resources because no one can run a model that large on a local machine – a typical open model use case. The better way to release models that are at or larger than this threshold is to package them behind an API hosted, conveniently, on the lab’s own cloud infrastructure with a consumption meter and a place to enter your credit card information.

For other labs, committing to building open models is a quasi-religion, while being able to do so at the one trillion parameter level is the price of admission. (See my earlier piece on the history of open source in China to see where this commitment came from.) If you can’t get to one trillion and beyond, you no longer deserve a seat at the table. Granted, the labs who conveyed this message enjoy the unique comfort of sitting inside a much larger conglomerate with many cash cows to keep the lights on and the chips humming.

During one of our meetings, when the question of open source came up, the leader of the lab in his most tiger-dad-like mannerism voiced his commitment to open source, because he wanted to keep his researchers honest, while his researchers looked on stoically. (“You said your model is good? Open source it and prove it to the community!”) In other conversations, the one lab that researchers feared the most from a competitive lens is Seed from ByteDance – ironically the one lab that is proudly closed source.

The diversity in opinion and execution in this open vs closed spectrum, I suspect, will only widen as this year chugs along. The divergence I witnessed on this trip is just the beginning.

“Western” vs “Chinese” Personality

Another dimension that these leading Chinese labs are diverging on is their corporate personality, if there is such a thing.

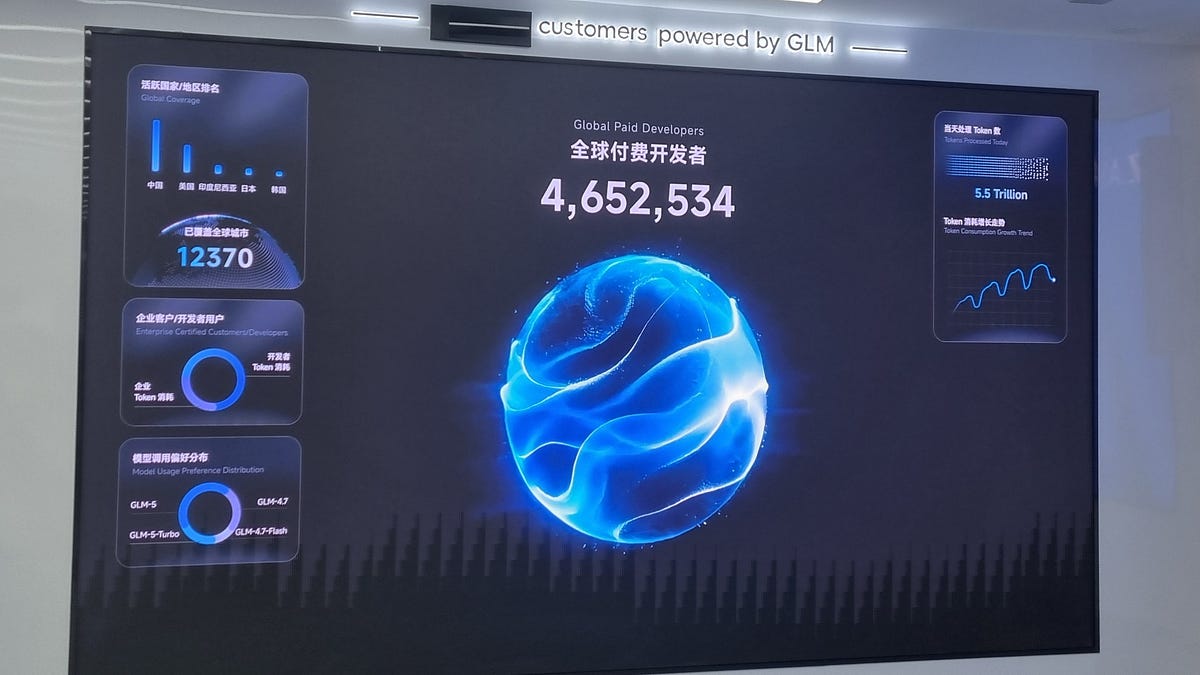

Some are trending stereotypically “Western”, where showcasing a cool, Silicon Valley vibe is being lived, breathed, and delivered even in the kind of swags they gifted us. This is likely both a conscious choice, traceable to the life story of the founder, and a business necessity dictated by the kind of customers – AI native, globally minded, tech forward – that the lab wants to attract.

Some are becoming rather “Chinese”, where building a shiny showroom as the first thing you see after the reception desk – worthy of both the CEO of a state-owned enterprise and cadres from the local party office – is of P0 priority. Again, I think, this is both a choice and a necessity that stem from the background of the founder and the type of business the lab chooses to pursue.

The way each lab carried itself in front of our motley crew of AI writers and researchers revealed this personality split in subtle but meaningful ways. What intrigues me is that regardless of whether a lab chooses to be more “Western” or more “Chinese”, the aim isn’t just about winning the home market, but also (and always) to expand overseas. And both personalities could work!

Why a “Western” vibe would work in a “going global” strategy is rather obvious. What isn’t obvious is why a “Chinese” personality could also work, especially when you are going after the wallets of old, legacy enterprises in the Global South. One of the labs, who embodied this “Chinese” identity, shared with us that they are doing business with a large chicken and eggs farm in a Global South country. To earn more of these kinds of businesses, they go directly to the CEOs of backwater yet large industries to push their AI product from the top-down – choosing to go where their fancier Silicon Valley counterparts are too high-browed to touch.

CEOs and shotcallers of most ilk actually love an entourage shepherding him around a shiny showroom, then getting wined, dined, and pampered before signing a purchase order. The tech native bosses, who hate talking to sales and marketing people are the exceptions, not the norm, in most business dealings. That’s why even OpenAI is now corralling a group of private equity funds, consulting firms, and system integrators to help with the unglamorous push for enterprise adoption with a so-called Deployment Company.

Top-down force-feeding still works like a charm in AI. And what could sound more stereotypically “Chinese” than that!

Friends Along the Way

As the world barrels towards a Trump-Xi summit this week, potentially to be followed by three more such occasions in the remainder of this year, it is hard to not feel a touch of anxiety over how these two AI superpowers would manage the risks and uncertainties of an inherently uncertain, probabilistic piece of technology.

Yet, if you practice mainstream consensus cleansing and narrative detoxing, as I regularly try to do, then things really aren’t that bad. Hanging out with researchers and chitchatting with the people doing the work is a good way to do this. You pick up on the humanity of the technology more rather than over-index on the abstraction of its hypothetical power.

That humanity comes through in seeing harsh realities. One researcher had a running fever yet felt obligated to entertain and engage with us for two days, while others worked overtime during a national holiday and likely slept on cots in the office hallway. It also comes through in joking asides and self-deprecating humor. One researcher from Moonshot AI half-seriously told us that AGI is the “friends you make along the way”. While inside Z.ai’s shiny showroom next to its reception desk, one display screen told all visitors in no uncertain terms that its journey to AGI is currently, and always will be, at 42%. (This is in reference to the number “42” in the Hitchhiker's Guide to the Galaxy that is a familiar cult meme among all techies.)

The United States of America and the People's Republic of China – as two abstractions of national identity, governing ideology, and technological modernity – may never become friends in my lifetime. But on this trip at least, I witnessed friendships born and mutual respect formed between ordinary researchers, who subscribe to these two different identities, comply with these two different ideologies, yet still want to build and participate in a modernity that AI empowers, together.

As a humanist at heart, that is good enough for me.

Gratitude

I would like to share a special note of gratitude to my lovely wife, who took care of our baby boy on her own for almost two weeks, so I could go on this trip. The energy that goes into “updating priors” isn’t just an individual’s commitment, but takes a loving partner and a supportive village to actualize. Forever grateful for her support!

Thank you to all the labs who made time for us, despite holidays, work schedules, and taking time away from your own family. Thank you to Caithrin for his herculean effort in organizing this journey.

As far as friends along the way are concerned, I could not have traveled with a more thoughtful, engaging, and friendly group of people. Here is a list of their writing on the trip, which I encourage you to read to paint a fuller picture of the experience: